All the technical innovation and confusing marketing around “big data” should leave any manufacturing engineer wondering just what big data means to them. Process manufacturing plants were, in fact, the original creators and users of big data. The digitization of these plants started in the 1970s and preceded the general recognition of the big data phenomenon by decades.

For example, OSIsoft’s PI, one of the first process plant historians capable of storing large amounts of data, was released in the early 1980s, predating commercial big data technologies by more than 20 years. Google published the papers describing the ideas behind the core components of Hadoop in 2003 and 2004, and the explosion of interest in big data didn’t occur until the last few years.

But despite the leading role of process plants in the creation of big data, meaningful use of big data still feels far away for most process engineers. They often feel separated from the data and its insights by IT departments, developers and data scientists. Many continue to rely on manual data manipulation in inadequate tools like spreadsheets, trapped in a low-productivity experience and frustrated with the slow pace of insights.

Big Data Defined

Part of the perceived distance between process engineers and big data arises because “big data” is used loosely, both as an umbrella term and in three distinct contexts.

- Big data is the fact: the expansion in data volume spurred by ongoing reductions in the cost of data creation, collection and storage. When data was expensive, less of it was collected; as generating and storing data has gotten cheaper, data has grown in both quantity and type. The numbers are staggering, with as much data stored in just minutes now as during multiple-year periods back in the 1960s.

- Big data is the technology: the innovation in solutions to manage, store and analyze this increasing volume of data. This includes new hardware architectures like horizontal scaling, new programming models like MapReduce (the core of the Hadoop ecosystem), new specialists like data scientists, and new software platforms like the 100+ NoSQL database offerings. A brief reading of any technical publication will quickly show options and offerings that exceed the grasp of all but the most committed observer.

- Finally, big data is the promise: the expectation of business executives that value will be created by combining the volume of big data with the technologies of big data to produce insights and improve business outcomes. This could also be called the pressure of big data, the demand that business leaders “check the box” and show the organization is tapping big data to achieve better results. These expectations and market pressures cause many companies to store vast amounts of data without a clear idea of how to derive value from it.
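For readers who have not seen MapReduce, the model named in the technology bullet above can be sketched in a few lines of Python. This is only an illustrative, single-process sketch, not Hadoop's implementation: the tag names, the sample values and the helper functions (`map_phase`, `shuffle`, `reduce_phase`, `run_job`) are all hypothetical and chosen for illustration.

```python
from collections import defaultdict

# Hypothetical historian samples as (tag name, value) pairs.
# These values are invented for illustration, not taken from a real plant.
readings = [
    ("reactor_temp", 351.0),
    ("feed_flow", 12.5),
    ("reactor_temp", 353.0),
    ("feed_flow", 13.5),
    ("reactor_temp", 352.0),
]


def map_phase(record):
    """Map step: emit one (key, value) pair per input record."""
    tag, value = record
    return tag, value


def shuffle(pairs):
    """Shuffle step: group every emitted value under its key."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_phase(key, values):
    """Reduce step: collapse all values for one key (here, to their mean)."""
    return key, sum(values) / len(values)


def run_job(records):
    """Run map, shuffle and reduce in sequence on a single machine."""
    pairs = [map_phase(record) for record in records]
    groups = shuffle(pairs)
    return dict(reduce_phase(key, values) for key, values in groups.items())


averages = run_job(readings)
print(averages)
```

In a real cluster the map and reduce calls would run in parallel across many machines, which is where horizontal scaling comes in; the sketch only shows how the data flows between the steps.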

Process Industry Challenges

There is a final challenge related to big data in the process industries, one mirrored to some degree in other industrial realms such as discrete parts manufacturing.

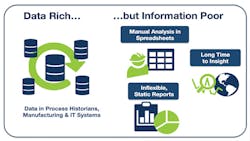

As ever more data is generated, there are fewer experts and resources available to inform and interpret the data. The retirement of seasoned engineers and the squeezing of budgets mean the big data equation in many industries is “more data, with increased demands for analysis and information, with fewer resources.” Or put another way, process and other manufacturing plants find they are data rich but information poor (Figure 1). Can the gap between engineers and data be closed, such that executives can start to see real results using the limited personnel resources on hand?

Figure 1: Many process and other manufacturing plants can’t find a feasible method to create usable information from the big data stored in their historians and other software platforms.

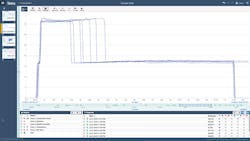

The gap can be closed if one assumption about big data is removed: that it must be delivered via complex software platforms with heavyweight requirements for IT architecture, expertise and cost. This assumption stems mostly from the way technologies for storing and analyzing big data volumes are currently developed and sold. This vendor-centric view does not match the way manufacturing companies would like to buy and implement these solutions; they would prefer an evolutionary approach that melds existing data with new software analysis tools in an Industrial Internet of Things (IIoT) implementation. (Figure 2 depicts such a solution, with existing control and monitoring data analyzed by a browser-based software solution.)

Figure 2: New solutions will layer data analytics on top of existing IT and OT architectures, instead of creating entirely new on-premises platforms.

Not surprisingly, engineers and executives want the experience of the commercial world, where big data enables solutions that are used every day by millions of customers in all kinds of intuitive, interactive experiences. These solutions separate the technology from the user experience to deliver results to those who know just what information they want from the data, without requiring them to become data scientists or programmers.

Examples are voice recognition on smartphones, Google Search on a variety of platforms, GPS technologies to guide drivers to destinations, and many others. The expectation that big data — in volume or technology — has to be delivered to the user in the form of a complex platform is thus proven wrong every day by consumers. But until now, these expectations have persisted in the experience of the process and manufacturing engineer.

Solutions Are Here

This revolution — from complex big data platforms requiring technical specialists for implementation and interface, to an application experience for process engineers — is the transformative opportunity to put big data insight and analysis into the hands of production experts. The goal is to provide features to engineers, rather than platforms, so they can quickly and directly interact with data without acquiring or employing IT expertise.

The software innovations required to deliver on this promise are similar to those already in place in numerous commercial software apps and web-based tools:

- Accessibility via a browser or app to provide a web-based interface

- Usability by process experts and manufacturing engineers

- Lightweight deployment that does not require data duplication

- Designed for time series data analysis in process plant and other manufacturing applications

- Features that apply machine learning and other advanced algorithms to simplify analysis

- Interactive, visual representation of data and results (Figure 3)

- Ability to quickly iterate, and to combine one result with another

- Ease of collaboration with colleagues within and across companies

Figure 3: Information presented in a visual format allows engineers and other manufacturing experts to directly interact with data and gain quick insight.

Applications that deliver these features will finally enable process and other manufacturing engineers to interact directly, quickly and easily with big data, creating information that can be used to improve production processes. Empowering the engineer, the one who actually understands the production processes in depth, is the game-changing approach that will deliver on the promise of big data.

Michael Risse is a Vice President at Seeq Corporation, a company building innovative productivity applications for engineers and analysts that accelerate insights into industrial process data. He was formerly a consultant with big data platform and application companies, and prior to that worked with Microsoft for 20 years. A graduate of the University of Wisconsin at Madison, he lives in Seattle.