The AI Memory Tax: Every Manufacturer Will Pay for the AI Boom

Key Highlights

- AI workloads are now disproportionately consuming the high-bandwidth memory that manufacturers depend on to run their operations.

- DRAM prices have surged approximately 172% year over year, and are projected to increase further.

- Manufacturers can audit their exposure risk in four steps outlined in this article.

- Once manufacturers have assessed their risk, the author details how they can take action.

When Dell’s COO told analysts in November that he had “never witnessed costs escalating at this rate,” he was not talking about tariffs or raw materials. He was talking about memory chips.

When Cisco’s CEO told Wall Street in February that the company was “revising contractual terms with channel partners and customers to address evolving component prices,” he was describing the same force.

Qualcomm’s CEO put it plainly on a February earnings call: Cost overruns in the smartphone business were “100% related to memory.”

Most manufacturing leaders have not connected these headlines to their own operations yet. They should.

The cause is straightforward. Three companies, Samsung, SK Hynix, and Micron, produce over 95% of the world’s DRAM (dynamic random access memory—common computer memory).

AI accelerators now require high-bandwidth memory that consumes three to four times the manufacturing capacity of standard chips per gigabyte. AI workloads are on track to absorb roughly 20% of global DRAM wafer output by the end of 2026.

The result: DRAM prices have surged approximately 172% year over year, and conventional DRAM prices were projected to jump another 90 to 95% in the first quarter of 2026 alone. No new fabs deliver meaningful output before 2028. That timeline is physics, not policy.

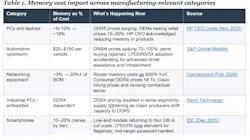

This is already showing up across manufacturing sectors. S&P Global Mobility projects automotive DRAM prices could spike 70 to 100% in 2026, warning of panic buying and production disruptions. Counterpoint Research reports that memory now accounts for more than 20% of the bill of materials in low-to-mid-end routers, up from roughly 3% a year ago, with vendors passing costs to buyers.

In the industrial space, DDR4 pricing has doubled in some segments. Enterprise servers and network infrastructure are absorbing memory cost inflation of 10 to 20% or more. HP’s CEO acknowledged the company is putting less memory in products to manage costs.

Nobody has given manufacturing leaders outside the semiconductor industry a practical way to assess this risk. Here’s one.

A Memory Exposure Audit in Four Steps

Step 1: Map your memory footprint. Most executives think “memory” means the RAM in office PCs. The actual footprint is broader: the servers running your ERP, the PLCs on your factory floor, the switches in your network closet and the products you ship. Andrea Klein, CEO of distributor Rand Technology, calls memory “the number one bottleneck” in electronics manufacturing today. If your organization does not have a consolidated view of every system and product that depends on DRAM, you cannot assess your exposure.

Step 2: Assess concentration risk. Your DRAM supply is concentrated whether you realize it or not. The entire market runs through three producers, and if your supply chain touches any of them—even indirectly through component suppliers or contract manufacturers—your exposure is real. IDC describes the current shift as a “potentially permanent, strategic reallocation” of capacity toward AI. This is not a temporary cycle.

Step 3: Quantify the cost impact. Memory’s share of product cost is rarely a rounding error. Even a rough calculation—memory cost as a percentage of bill-of-materials (BOM) multiplied by the projected price increase—will show which product lines face margin compression first. The table below uses public data to illustrate the scale.

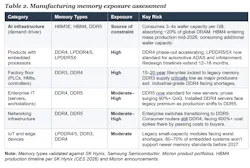
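The rough calculation in Step 3 can be sketched in a few lines of Python. This is an illustrative sketch, not a pricing model: the product names, margins and two of the three memory shares are hypothetical. Only the 20% router share and the 90% price increase echo figures cited in this article. It also assumes selling prices stay flat and that the BOM is the entire cost of goods.

```python
from dataclasses import dataclass


@dataclass
class ProductLine:
    name: str
    memory_share: float   # memory cost as a fraction of BOM cost (0.20 = 20%)
    gross_margin: float   # current gross margin as a fraction of selling price


def bom_cost_increase(memory_share: float, price_increase: float) -> float:
    """Fractional rise in total BOM cost if only memory prices change."""
    return memory_share * price_increase


def margin_after(gross_margin: float, bom_increase: float) -> float:
    """Gross margin after the BOM rise, assuming a flat selling price and
    that BOM is the whole cost of goods (both simplifying assumptions)."""
    return 1 - (1 - gross_margin) * (1 + bom_increase)


def rank_by_exposure(lines, price_increase):
    """Return (name, bom_increase, new_margin) tuples, most exposed first."""
    rows = []
    for line in lines:
        inc = bom_cost_increase(line.memory_share, price_increase)
        rows.append((line.name, inc, margin_after(line.gross_margin, inc)))
    return sorted(rows, key=lambda row: row[1], reverse=True)


# Hypothetical portfolio: names, margins and most shares are made up.
portfolio = [
    ProductLine("industrial controller", memory_share=0.05, gross_margin=0.35),
    ProductLine("network router", memory_share=0.20, gross_margin=0.30),
    ProductLine("edge gateway", memory_share=0.10, gross_margin=0.25),
]

# Illustrative price increase (90%), taken from the low end of the
# first-quarter 2026 projection quoted earlier in the article.
PROJECTED_INCREASE = 0.90

ranked = rank_by_exposure(portfolio, PROJECTED_INCREASE)
for name, inc, new_margin in ranked:
    print(f"{name}: BOM +{inc:.1%}, margin -> {new_margin:.1%}")
```

Under these assumptions, a product with 20% of its BOM in memory sees total BOM cost rise 18% from a 90% memory price increase, which would take a 30% gross margin down to roughly 17.4% if prices hold. Swapping in your own shares and margins shows which lines hit margin compression first.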

Step 4: Tier and prioritize. Cost impact tells you where the money is. The next step tells you where the risk is highest. Not all products and operations carry equal exposure. The table below classifies exposure by category. The first row, AI infrastructure, is the demand driver creating the constraint. The remaining rows represent the manufacturing systems and products that compete for what remains.

What To Do About It

Start with your contract manufacturers. If you outsource manufacturing, ask what your CM is paying for memory, where they are sourcing it, and what happens to your production schedule if their allocation gets cut. Most mid-size manufacturers cannot answer these questions today.

Extend procurement horizons. Companies that secured supply agreements 12 to 24 months in advance are significantly less exposed today. Some memory suppliers have signed contracts extending up to four years. If your procurement team reviews memory pricing quarterly, they are working on stale information. Quote validity windows have compressed from quarters to as little as seven days.

Engage at the design phase. For high-exposure products, memory availability should be a design constraint, not a purchasing problem discovered at production ramp. Rand Technology has warned that redesigning around a different memory type can cost millions and take 12 to 18 months.

One warning for companies still designing around DDR4: Manufacturers are actively winding down DDR4 production to free capacity for DDR5 and HBM. Micron announced in December 2025 that it would exit its consumer Crucial business entirely. DDR4 spot prices have already exceeded DDR5 in some markets, an inversion unthinkable two years ago. The question is not whether to plan a DDR5 migration, but when.

Watch the leading indicators. Quarterly earnings from SK Hynix, Samsung and Micron signal where DRAM availability is heading before it shows up in your quotes. TrendForce and IDC publish regular supply-demand analyses that provide four- to six-month visibility. Track HBM4 production ramp-ups through mid-2026; as HBM4 enters mass production, it will consume additional wafer capacity, intensifying pressure on conventional DRAM.

There is a deeper constraint beneath this one. The advanced packaging technologies that integrate high-bandwidth memory into AI chips, such as TSMC’s CoWoS, are themselves fully booked through 2026 and concentrated in even fewer hands. That bottleneck is structural and unlikely to ease before 2028.

But you do not need to solve the advanced packaging problem. You need to solve your own. Most manufacturers have a material exposure to a supply chain they have not mapped, a pricing environment they have not modeled and a structural shift they are still treating as cyclical. Waiting for the cycle to pass is not a strategy. Understanding your own exposure is.

About the Author

Nikhil Vishnu Vadlamudi

Senior Manager, Business Operations, Intel Foundry

Nikhil Vishnu Vadlamudi works in semiconductor operations. His career spans the semiconductor value chain from equipment manufacturing (Applied Materials) to fabless operations (AMD) to foundry operations (Intel). He actively writes about emerging trends in semiconductor operations and AI infrastructure economics.