How We Stopped Babysitting Our Data and Got Faster at Ford

Key Takeaways for Industry Leaders

- The Problem: Traditional on-premises frameworks often lack the "stretch" to handle sudden data surges.

- The Fix: Transition to cloud architectures and leverage native cloud services to decouple and scale data processing.

- The Innovation: Move from sequential data saving to group-based batching to eliminate system "choke points."

- The Result: Faster insights for go-to-market strategies and less operational overhead for IT teams.

In today’s rapidly evolving, competitive market, speed isn’t just an advantage; it’s a survival trait. The large volume of operational data originating from manufacturing sites has become a maintenance burden. The data is valuable as a source of insight, but it is also an infrastructure nightmare.

Manufacturing executives still using yesterday’s data to make today’s decisions are behind the curve. A new shift towards cloud analytics is changing that, moving enterprises away from traditional hardware into the live-data era.

The Death of the "Framework Bottleneck"

For decades, companies have relied on traditional on-premises frameworks to run their enterprise applications, which has led to maintenance and elasticity issues. These include:

High operational overhead: The constant need for manual hardware patching, cooling, and physical security.

Rigid infrastructure: The inability to quickly scale computing power up or down based on real-time factory demand.

Capital inefficiency: Significant 'up-front' investment in hardware that often sits underutilized during normal operating hours.

Whenever data spikes because of an increase in sales or a marketing campaign, traditional frameworks buckle under the pressure.

Our recent research into processing large volumes of data identified a better way that does not disrupt business processes. By migrating enterprise applications to cloud platforms and leveraging native cloud services, companies can benefit from autoscaling and from reduced monitoring and maintenance activities. (Autoscaling is a dynamic resource management capability that automatically adjusts infrastructure capacity to match real-time demand, optimizing cost and ensuring continuous service availability during peak volumes without manual intervention.)

Cloud platforms process high volumes of information entirely in the background, so there’s no impact on day-to-day business operations. They scale dynamically and process data in real time, ensuring the system handles peak volumes instantly while maintaining cost-efficiency and operational stability.
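For readers who want to see the idea behind autoscaling in concrete terms, here is a minimal Python sketch of a proportional scaling rule. It is an illustration only, not the logic of any particular cloud service or of our own systems. The function name `desired_capacity`, its parameters, and the sample numbers are all assumptions made for this example. The rule grows or shrinks capacity in proportion to how far the observed load per unit is from a target, then clamps the result to a safe range.

```python
import math


def desired_capacity(current_units, observed_load, target_load,
                     min_units=1, max_units=20):
    """Return how many compute units to run (hypothetical helper).

    current_units: units running right now
    observed_load: measured load per unit (for example, CPU percent)
    target_load:   load per unit we would like to hold
    """
    if current_units < 1 or target_load <= 0:
        raise ValueError("current_units must be >= 1 and target_load > 0")
    # Scale capacity by the ratio of observed load to target load,
    # rounding up so we never under-provision.
    raw = math.ceil(current_units * observed_load / target_load)
    # Keep the answer inside the allowed range.
    return max(min_units, min(max_units, raw))


# Illustrative values only: 4 units running at 90% load against a 60% target.
print(desired_capacity(4, 90, 60))
```

With these sample inputs the rule asks for more units during the surge, and the same function asks for fewer when load falls below the target, which is the "stretch" that fixed on-premises hardware lacks.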

Why the C-Suite Should Care

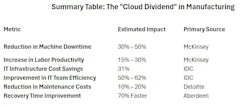

This isn't just an "IT project." This is a strategic pivot that impacts the bottom line in three specific ways:

Real-time agility: When C-level executives can see sales volumes and commissions in real time, they can pivot. Whether it’s adjusting product pricing on the fly or reallocating a marketing budget mid-campaign, live data allows for surgical precision in a volatile market.

Ending the maintenance drain: Managed cloud services can handle the "heavy lifting" of scaling and global replication. (Global replication is the process of automatically copying and syncing your data across multiple locations worldwide so that it is always available, fast for local users, and backed up if one site fails.) This frees up your high-value technical teams to focus on innovation and go-to-market strategies rather than manual system maintenance.

Solving the persistence problem: One of our most significant findings involved the "persistence layer," which governs how data is saved to the system. By replacing old-school sequential saving, where records are written one at a time, with a group-based approach that writes them in batches, we’ve seen a massive reduction in input/output bottlenecks. In plain English: the system stays fast, even when the data volume gets huge.
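The difference between sequential and group-based saving can be sketched in a few lines of Python. This is a simplified illustration using the standard library's `sqlite3` module and an in-memory database, not our production persistence layer. The table name `readings`, the helper names, and the group size of 500 are assumptions chosen for the example. The point it shows is that grouping turns one commit per record into one commit per batch.

```python
import sqlite3

INSERT_SQL = "INSERT INTO readings (site, value) VALUES (?, ?)"


def make_db():
    """Create an in-memory database with a hypothetical readings table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE readings (site TEXT, value REAL)")
    return conn


def save_sequential(conn, rows):
    """Sequential pattern: one insert and one commit per record."""
    commits = 0
    for row in rows:
        conn.execute(INSERT_SQL, row)
        conn.commit()
        commits += 1
    return commits


def save_in_groups(conn, rows, group_size=500):
    """Group-based pattern: one bulk insert and one commit per group."""
    commits = 0
    for start in range(0, len(rows), group_size):
        group = rows[start:start + group_size]
        conn.executemany(INSERT_SQL, group)
        conn.commit()
        commits += 1
    return commits


# Illustrative data: 1,200 made-up readings spread across three sites.
rows = [(f"plant-{i % 3}", float(i)) for i in range(1200)]

conn = make_db()
print("commits with grouping:", save_in_groups(conn, rows))
print("rows stored:", conn.execute("SELECT COUNT(*) FROM readings").fetchone()[0])
```

Each commit is a round trip to storage, so fewer commits generally means less waiting on input/output. The right group size depends on the database and the workload, and it is worth measuring rather than assuming.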

The Bottom Line

The transition to cloud-native architecture is a critical evolution for the modern enterprise. It bridges the gap between the technical "engine room" and the executive "cockpit."

By capitalizing on managed real-time data flows and a refined storage strategy, organizations aren't just processing numbers faster; they are building a scalable, global infrastructure that provides the high-fidelity insights required for agile decision-making.

About the Author

Nagadithya Nookala

Technical Product Manager, Ford Motor Co.

Nagadithya Nookala leads full-stack and cloud-based AI product development, with 13 years of experience modernizing manufacturing operations by delivering advanced analytics solutions using a data-driven approach.

Sanjay Ahire

Data Scientist, Ford Motor Co.

Sanjay Ahire is a results-oriented leader with over 15 years of experience in data science and manufacturing process engineering. He has delivered complex global programs across the aerospace and automotive sectors, translating business strategy into impactful technology solutions.